How to Optimize HubSpot Blog Content for Answer Engines

Multiple industry studies and platform updates now show that AI-generated responses are changing click behavior, citation patterns, and content...


9 min read

Campaign Creators: 05/12/26


More people are starting to search through AI tools instead of scrolling through traditional search results. Buyers now ask platforms like OpenAI ChatGPT, Google Gemini, and Perplexity AI for recommendations, comparisons, and answers directly inside a conversation. Because of this, marketers are beginning to look beyond rankings and traffic alone to understand whether their brand is actually appearing in AI-generated responses.

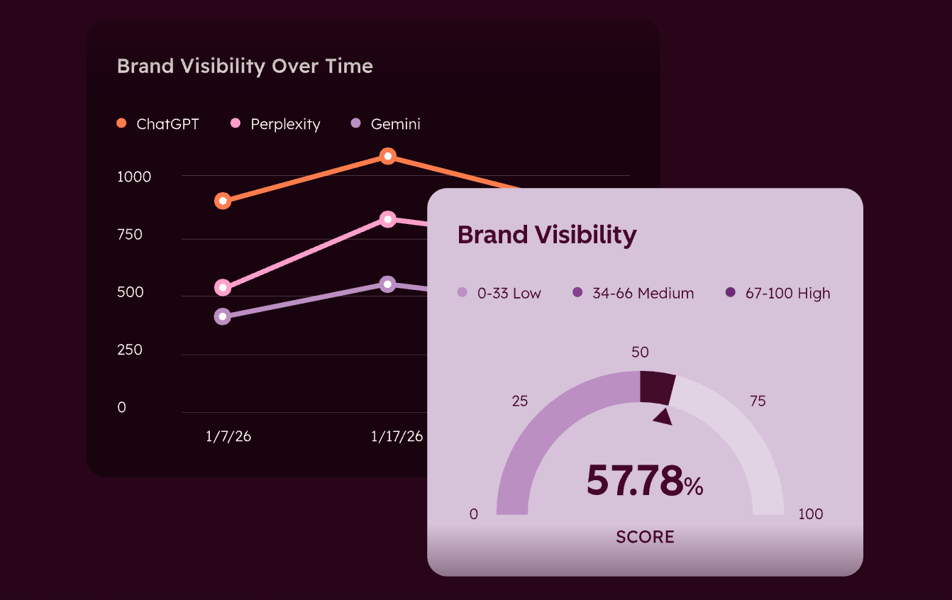

This is where AI Visibility Scores come in. An AI Visibility Score measures how frequently and prominently a brand is mentioned across AI answer engines for relevant industry questions and prompts. The growing importance of these scores has also led platforms like HubSpot to launch tools such as HubSpot AEO.

In this article, you’ll learn what AI Visibility Scores are, how they are calculated, what counts as a good score, and how marketers are using them to improve AI search visibility.

An AI Visibility Score (AVS) is a normalized 0 to 100 metric that measures how frequently and prominently a brand is cited or recommended across AI answer engines such as ChatGPT, Perplexity, Gemini, and other AI platforms when users ask questions relevant to a specific product or service.

A comprehensive visibility score typically aggregates several qualitative and quantitative signals to determine a brand's overall presence:

Together, these signals help quantify how visible and competitive a brand is across AI-driven search and recommendation platforms.

The calculation begins with identifying a set of 20 to 30 target prompts. These are not branded queries (e.g., "What is [Company Name]?"). They are unbranded questions a real buyer would ask during the research process.

Once the prompt set is established, each prompt is tested across multiple AI answer engines to measure how consistently a brand appears across platforms. To keep the score statistically stable and reflective of broader market visibility, prompts are run across AI models such as ChatGPT, Gemini, Perplexity, and Claude.

Each response from every AI tool is then scored on a 0-to-5 point scale based on the brand's prominence within the answer.

After all responses are scored, the final AI Visibility Score is calculated by dividing the total raw score by the maximum possible score and multiplying the result by 100.

Formula: (Total Raw Score / Maximum Possible Raw Score) x 100.

For example, if you track 20 prompts across 4 AI tools, there are 80 scoring events. At 5 points per event, the maximum possible raw score is 400. If your brand earns 136 points across those events, your AVS is 34.
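The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not the method of any specific tool: the function name `ai_visibility_score` and the sample score distribution are hypothetical, chosen only so the total matches the 136 points in the example.

```python
def ai_visibility_score(event_scores, max_points_per_event=5):
    """Return the AVS (0 to 100) for a flat list of per-event scores.

    One "event" is one prompt run on one AI tool, scored 0 to 5.
    """
    if not event_scores:
        raise ValueError("at least one scoring event is required")
    if any(s < 0 or s > max_points_per_event for s in event_scores):
        raise ValueError("each score must be between 0 and the per-event maximum")
    total_raw = sum(event_scores)
    max_raw = max_points_per_event * len(event_scores)
    # (Total Raw Score / Maximum Possible Raw Score) x 100
    return total_raw * 100 / max_raw


# 20 prompts x 4 tools = 80 events. Illustrative scores only:
# 20 events at 5 points + 12 events at 3 points = 136 raw points.
scores = [5] * 20 + [3] * 12 + [0] * 48
avs = ai_visibility_score(scores)
print(avs)
```

With 80 events the maximum raw score is 400, so 136 raw points yields an AVS of 34, matching the worked example.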

HubSpot’s Answer Engine Optimization (AEO) is a toolset you can use to obtain a Brand Visibility Score. This score rolls up several critical metrics, including platform coverage, mention frequency, citation rate, and sentiment, into one directional indicator that can be used for internal reporting and competitive benchmarking.

The toolset monitors brand presence across three primary AI platforms: ChatGPT, Gemini, and Perplexity. For each engine, it tracks four core signals:

The toolset transitions from data to strategy via its Recommendations tab. It generates a prioritized backlog of content tasks. These recommendations identify specific areas where competitors are cited and suggest optimizations such as creating listicles, adding FAQ schemas, or developing "answer-first" content structures to help AI extract and cite your information more effectively.

A good AI visibility score is not a universal constant. It depends heavily on your industry maturity, category density, and competitive landscape. However, based on benchmarks from B2B SaaS engagements and diagnostic tools, we can categorize scores into specific stages of visibility and authority.

A good score is also relative to your vertical's competitive density. In crowded markets like Project Management or CRM, reaching a score of 50 is significantly more difficult due to the volume of established citation signals from competitors.

A brand in a specialized field with fewer active competitors can often reach an AVS of 70 or above within six months with dedicated optimization efforts.

For those using HubSpot's diagnostic tool, a score between 70 and 100 suggests a brand is "well-represented," while 40 to 69 indicates recognition exists but significant gaps remain (the range where most mid-sized businesses currently land).

The most immediate application of an AI Visibility Score is performing gap analysis. By examining specific prompts where a brand is absent or only cited as a secondary mention, marketers can identify exactly where their "Citation Engineering" coverage is missing.

Marketers also use AVS to measure their Share of Voice relative to competitors within the same category.
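One way to put a number on Share of Voice is to divide each brand's raw points by the combined points of every tracked brand in the category. The sketch below assumes that definition, which the article does not specify; the function name `share_of_voice` and the brand names and point totals are hypothetical.

```python
def share_of_voice(raw_points_by_brand):
    """Return each brand's share (in percent) of all raw points earned.

    Assumption: share of voice = brand points / total points across
    every tracked brand, for the same prompt set and the same AI tools.
    """
    total = sum(raw_points_by_brand.values())
    if total == 0:
        # No brand was mentioned anywhere; every share is zero.
        return {brand: 0.0 for brand in raw_points_by_brand}
    return {
        brand: points * 100 / total
        for brand, points in raw_points_by_brand.items()
    }


# Hypothetical raw point totals for three brands.
example = {"YourBrand": 136, "CompetitorA": 204, "CompetitorB": 60}
sov = share_of_voice(example)
print(sov)
```

Because every brand is scored against the same prompts and tools, the shares are directly comparable and sum to 100.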

Marketers use the score to evaluate the quality of their brand's reputation. Because AVS factors in sentiment analysis and citation depth, it reveals whether AI models trust the brand enough to provide it as a primary recommendation or merely a passing reference.

High scores in these qualitative dimensions indicate that a brand's digital PR and social proof efforts are successfully training AI models to view the brand as a source of truth.

AI models retrieve information in chunks, so content must be designed for multi-engine retrieval rather than just ranking on a results page.

Content structure alone is not enough. AI systems also rely heavily on machine-readable signals and consistent entity recognition to determine whether a brand is trustworthy and contextually relevant.
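FAQ schema is one common machine-readable signal. As a rough sketch, the Python below builds schema.org `FAQPage` JSON-LD from question and answer pairs. The helper name `faq_jsonld` is hypothetical, and how any given AI engine actually uses this markup is not confirmed here.

```python
import json


def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "What is an AI Visibility Score?",
        "A normalized 0 to 100 metric that measures how frequently and "
        "prominently a brand is cited across AI answer engines.",
    ),
])
print(markup)
```

The resulting string would typically be placed on the page inside a `<script type="application/ld+json">` element.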

Beyond on-site optimization, AI visibility is also shaped by how often a brand is referenced and reinforced across the broader web ecosystem. Models frequently learn authority patterns from external citations, discussions, and recurring mentions.

As AI search continues evolving, marketers will likely need to combine traditional SEO practices with stronger entity optimization, structured content design, and external authority signals.

The process of calculating a score often involves a subjective judgment call. For example, determining whether a brand is a "primary recommendation" (5 points) or a "secondary mention" (3 points) requires a scorer to interpret the AI's intent. If a brand does not use a single, fixed scorer or a strictly defined rubric, the resulting trend data can easily become inconsistent and untrustworthy.
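A strictly defined rubric can be as simple as a fixed mapping from labels to points, so that every scorer assigns the same value to the same judgment. The sketch below encodes only the two levels named above; the `ABSENT = 0` level and the names `Prominence` and `score_label` are assumptions added for illustration.

```python
from enum import IntEnum


class Prominence(IntEnum):
    """Fixed rubric: one label, one point value."""
    ABSENT = 0                  # assumption: brand not mentioned at all
    SECONDARY_MENTION = 3       # from the text above
    PRIMARY_RECOMMENDATION = 5  # from the text above


def score_label(label):
    """Convert a scorer's label into points, rejecting anything off-rubric."""
    key = label.strip().upper().replace(" ", "_")
    try:
        return int(Prominence[key])
    except KeyError:
        raise ValueError(f"label not in rubric: {label!r}") from None


print(score_label("primary recommendation"))
print(score_label("secondary mention"))
```

Rejecting off-rubric labels, rather than guessing a value, keeps ad hoc judgments out of the trend data.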

AI engines are non-deterministic: the same prompt can produce a different answer each time it is run. Even small changes, such as adding a space at the end of a prompt, can alter a model's response. Because of this measurement noise, marketers must run multiple samples per prompt to find a reliable average; a single snapshot or screenshot is often unrepresentative of true visibility.
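To make the sampling idea concrete, the sketch below averages several scored runs of one prompt on one AI tool and reports the spread. The function name `summarize_samples` and the five sample scores are hypothetical; how many runs are enough is a judgment call this example does not settle.

```python
from statistics import mean, stdev


def summarize_samples(samples):
    """Summarize repeated 0-to-5 scores for one prompt on one AI tool."""
    if len(samples) < 2:
        raise ValueError("run each prompt at least twice to estimate spread")
    return {
        "n": len(samples),
        "mean": mean(samples),    # the value to feed into the AVS total
        "stdev": stdev(samples),  # sample standard deviation across runs
    }


# Hypothetical scores from five runs of the same prompt.
runs = [5, 3, 5, 0, 3]
summary = summarize_samples(runs)
print(summary)
```

A large standard deviation relative to the mean suggests that a single screenshot of that prompt would be a poor guide to the brand's actual visibility.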

AVS is a leading indicator, not a lagging one. It measures the conditions for success but does not directly measure revenue attribution or lead generation. There is typically a 6 to 12-week lag between a rising visibility score and the appearance of attributable AI-sourced pipeline. Marketers who expect immediate revenue correlation may prematurely dismiss the metric as a vanity number.

A brand's score can decline through no fault of its own. AVS can drop if:

AI Visibility Scores attempt to solve a problem that traditional tools cannot fully see, but they still face attribution blind spots. Since many users copy-paste URLs from AI tools, search for brands separately, or continue browsing without clicking tracked links directly, some marketers estimate that up to 60% of the AI-assisted discovery journey may remain invisible in standard SEO analytics platforms, even when a brand has strong AI visibility.

Because of these limitations, AI visibility scores work best as a complement to traditional SEO performance indicators. Organic rankings, search traffic, conversions, backlinks, and engagement metrics still provide valuable signals that AI visibility tools cannot fully capture on their own.

AI Visibility Scores are becoming a new way for marketers to understand whether their brand is showing up inside AI-generated answers across platforms. As more buyers rely on conversational AI for recommendations and research, visibility inside these responses may influence how brands are discovered long before a user visits a website or clicks a search result.

Tools like HubSpot AEO are giving marketers new ways to measure and monitor this shift, and businesses can also use these tools more effectively with guidance from HubSpot experts and implementation partners familiar with AI search optimization.

At Campaign Creators, we help businesses implement HubSpot AEO strategies that strengthen AI visibility, improve attribution tracking, and optimize content for AI-generated discovery.

The most important AI platforms currently include OpenAI ChatGPT, Google Gemini, Perplexity AI, and Anthropic Claude because they are among the most widely used AI answer engines for research, recommendations, and product discovery.

Yes, small businesses can still compete in AI search visibility because AI tools often prioritize relevance, structured answers, expertise, and trusted citations rather than company size alone.

Most businesses should track their AI Visibility Score weekly or monthly because AI-generated results can change frequently due to model updates, new content, competitor activity, and shifting citation patterns.

AI Visibility Scores can act as an early indicator of future lead generation because stronger visibility often increases brand exposure during the research phase before users visit a website directly.

AI tools are more likely to cite content that provides direct answers, structured formatting, original research, statistics, comparison data, FAQs, and clearly organized topical information. Pages with strong authority signals and machine-readable schema markup also tend to perform better in AI-generated responses.

Traditional SEO focuses on improving rankings in search engine results pages, while AEO focuses on increasing visibility inside AI-generated answers and conversational search experiences. GEO (Generative Engine Optimization) is a broader term often used to describe optimizing content for generative AI systems and retrieval-based AI platforms.
